Bots are usually seen as software robots designed to replace people in business communication, in information transfer, or in creating the impression of customer service.

Today they very often appear in a negative context because of poorly executed automation of “marketing / sales processes.” Most of us associate bots with intrusive phone calls whose sole purpose is to obtain our data and which ignore our protests and refusals. Such practices create a new, interactive category of spam and build negative associations with the use of bots in general.

However, when properly applied, these advanced automated solutions can be genuinely useful: they let us interact effectively with systems through a voice interface alone, and they relieve us of the repetitive parts of an interaction (for example, collecting basic data in customer service). The technology has developed rapidly in recent years and brings many promising improvements.

Where did bots come from?

How did these systems reach their current stage of development? One of the original motivations, and one of the first directions of bot development, was the desire to pass the Turing test. A brief explanation is in order. The Turing test (according to Wikipedia) is a way of determining a machine’s ability to use natural language and, indirectly, of showing that it can think in a way similar to a human. Alan Turing proposed the test in 1950 as part of his research into artificial intelligence (AI), as a replacement for the emotionally loaded and, in his view, meaningless question “Can machines think?” with a better-defined one.

Passing this test has long been the goal of many research teams and has fueled work on artificial intelligence and ‘rule-based’ systems. Today, now that we know how to build a program that can pass such a test, we also understand much more about artificial intelligence and about the difference between conversational systems and actual “artificial intelligence.” We also know that a system able to convince a person in a blind test that they are talking to another person is not, by that fact alone, “intelligent.”

So let’s look at bots in terms of the function they are meant to perform: serving as another interface between humans and machines.

Advances in today’s electronics and cloud services mean that we can use natural language with many of the solutions that surround us in everyday life. Voice assistants (Google Now, Siri, Cortana, Alexa, etc.) let us control home automation and entertainment systems and make it easier to use web resources. Of course, these solutions can range from complex AI-based systems to simple rule-based mechanisms.

How does a bot work?

What are bots, really? They introduce us to a mode of interaction that is close to natural spoken communication, yet can be difficult to use. This new type of interface requires the user to adapt to a few basic assumptions about how it is meant to be used.

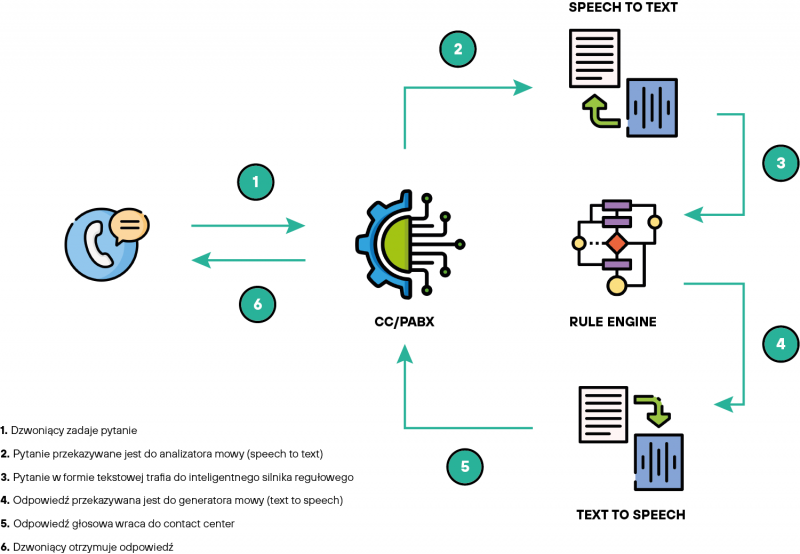

Let’s start with the structure of the solution. Simply put, it is based on natural language processing (NLP). If we want the bot to have a voice interface, we also need a component for speech-to-text conversion (STT, also called automatic speech recognition, ASR) and one for the reverse function, text-to-speech (TTS). The decoded text is passed to the NLP module and then to the conversational engine, which drives the flow of the interaction and is responsible for its effectiveness. Effectiveness is the key here because, like any interface, a bot’s interface is built to let us carry out the specific interactions planned by its designer.
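The pipeline described above can be sketched as follows. This is a minimal illustration under stated assumptions, not a real implementation: `speech_to_text`, `understand`, `text_to_speech` and `ConversationEngine` are hypothetical stubs standing in for an ASR service, an NLP module, a TTS engine and a conversational engine, and the “audio” is simply UTF-8 text so the example runs without any speech libraries.

```python
from dataclasses import dataclass, field


# Hypothetical stand-ins for real components: a production bot would call an
# ASR service, an NLP/NLU model and a TTS engine at these points.
def speech_to_text(audio: bytes) -> str:
    """Stub STT/ASR: treats the 'audio' as UTF-8 encoded text."""
    return audio.decode("utf-8")


def understand(text: str) -> dict:
    """Stub NLP: tiny keyword-based intent detection."""
    lowered = text.lower()
    if "appointment" in lowered or "book" in lowered:
        return {"intent": "book_appointment", "text": text}
    return {"intent": "unknown", "text": text}


def text_to_speech(text: str) -> bytes:
    """Stub TTS: returns the reply 'spoken' as UTF-8 bytes."""
    return text.encode("utf-8")


@dataclass
class ConversationEngine:
    """Drives the flow: chooses the next reply based on the detected intent."""
    history: list = field(default_factory=list)

    def respond(self, nlu_result: dict) -> str:
        self.history.append(nlu_result["intent"])
        if nlu_result["intent"] == "book_appointment":
            return "Sure. What is your name?"
        return "Sorry, I can help you book an appointment. Would you like to do that?"


def handle_turn(audio: bytes, engine: ConversationEngine) -> bytes:
    """One turn of the conversation: STT -> NLP -> conversational engine -> TTS."""
    text = speech_to_text(audio)
    nlu_result = understand(text)
    reply = engine.respond(nlu_result)
    return text_to_speech(reply)


if __name__ == "__main__":
    engine = ConversationEngine()
    print(handle_turn(b"I'd like to book an appointment", engine).decode("utf-8"))
    # prints: Sure. What is your name?
```

Because each stage only exchanges plain text or a small dictionary, any stub could in principle be replaced by a real STT, NLU or TTS component without changing the shape of `handle_turn`.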

We also need to understand the limitations of the system we are working with, as well as the requirements of the systems it integrates with. As you can see, our sophisticated interface has to serve a specific purpose. In business applications, bots are typically connected to ticketing systems and CRM solutions. So we have a specific tool and a specific goal. What remains is to build a functional and user-friendly voice interface.

How to build a good bot?

- Define its use (purpose) – just as we do not put everything on one screen when designing a graphical menu, and we follow patterns users are already familiar with, we also need to take care of the right UX (user experience). Prepare a list of the systems involved and of the data used and collected during the interactions, and identify the types of those interactions.

- Plan the course of the conversation (flow) at a high level – how many branches and how many levels. It is important to guide the user in a natural and intuitive way, in as few steps as possible. Plan and test the effectiveness of each option.

- Collect data and work with it – these systems gather a lot of data about interactions with users and about how effective those interactions are. The key is to use this data to build an even better flow. The collected data also makes it possible to diagnose shortcomings and errors in our assumptions.

- Take into account the technical specifics of the systems you work with – different ASR systems and conversational engines have their own strengths and weaknesses, so use them accordingly.

- Remember who you are building the solution for – think about who your clients and users are. A good definition of the target group will help you plan the solution better. If users do not want to cooperate or do not know how to use the solution, the system will not help them.

- Do not forget about “leading” the user – only the youngest generation encounters voice systems at home every day and learns early on how to hold an effective conversation with them. Many of today’s users are only just learning the patterns of such an interface, so it is important to guide them properly, showing what is possible and how to interact effectively with this new type of system.

- Remember that the bot complements your existing infrastructure – its operation, language, and functions should be consistent with the way you already communicate with your customers.
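The flow-planning and data-collection advice above can be sketched in a few lines. This is a minimal illustration, assuming a hypothetical appointment flow stored as a dictionary (`FLOW`) in which each node maps a user choice to the next node and nodes with no options are goals. `steps_to_goals` uses a breadth-first search to find the fewest turns needed to reach each goal, `max_branching` reports the widest set of options offered at any step, and `drop_off_rates` assumes a hypothetical log format (one list of visited node names per session) to show where users give up. None of these names come from a particular bot platform.

```python
from collections import Counter, deque

# Hypothetical flow for an appointment bot: each node maps a user choice to
# the next node; nodes with no options (empty dicts) are goals.
FLOW = {
    "start": {"book": "choose_specialist", "cancel": "confirm_cancel", "other": "agent"},
    "choose_specialist": {"gp": "pick_date", "dentist": "pick_date"},
    "pick_date": {"confirm": "booked"},
    "confirm_cancel": {"yes": "cancelled", "no": "start"},
    "booked": {},
    "cancelled": {},
    "agent": {},
}


def steps_to_goals(flow: dict, root: str = "start") -> dict:
    """Breadth-first search: fewest user turns needed to reach each goal node."""
    distance = {root: 0}
    queue = deque([root])
    while queue:
        node = queue.popleft()
        for nxt in flow[node].values():
            if nxt not in distance:
                distance[nxt] = distance[node] + 1
                queue.append(nxt)
    return {node: d for node, d in distance.items() if not flow[node]}


def max_branching(flow: dict) -> int:
    """Largest number of options offered at any single step."""
    return max(len(options) for options in flow.values())


def drop_off_rates(flow: dict, sessions: list) -> dict:
    """For each non-goal step, the share of sessions visiting it that ended there.

    Each session is a list of visited node names (a hypothetical log format).
    """
    visits = Counter()
    abandoned = Counter()
    for path in sessions:
        visits.update(set(path))  # count each step at most once per session
        last = path[-1]
        if flow[last]:  # session ended on a step that still expected input
            abandoned[last] += 1
    return {node: abandoned[node] / visits[node] for node in visits if flow[node]}


if __name__ == "__main__":
    print(steps_to_goals(FLOW))  # {'agent': 1, 'cancelled': 2, 'booked': 3}
    print(max_branching(FLOW))   # 3
    logs = [
        ["start", "choose_specialist", "pick_date", "booked"],
        ["start", "choose_specialist"],
        ["start", "agent"],
        ["start", "choose_specialist", "pick_date"],
    ]
    print(drop_off_rates(FLOW, logs))
```

On this sample flow, completing a booking takes three turns while reaching an agent takes one; a step with a high drop-off rate in real logs would be a natural first candidate for rewording or splitting.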

How to communicate with a bot?

From the user’s perspective, it is important to remember that bots are designed with a specific purpose in mind, such as booking a medical appointment. A well-designed system tries to guide us by announcing the next steps and options. The bot works in sequences and looks for specific data at each step, which is why it cannot handle information that the current step does not expect. To use this form of communication comfortably, we need to pay attention to what information we have been asked for. When the bot asks for a name, it expects exactly that; any additional remarks make it harder to pick out that specific piece of information. The quality of our voice is also extremely important: background noise and interference seriously hinder speech recognition algorithms, so it is crucial to communicate in good conditions.
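The step-by-step behavior described above is commonly implemented as slot filling. The sketch below assumes a hypothetical two-step appointment dialog (`STEPS`, `Step`, `SlotFillingBot` are illustrative names) and uses regular expressions in place of a real NLU model. The name step accepts only a short answer such as “Anna” or “My name is Anna”, so an answer padded with unrelated remarks is rejected and the question is repeated; the date step simply looks for a date in the format YYYY-MM-DD anywhere in the utterance.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Step:
    slot: str     # name of the piece of data this step collects
    prompt: str   # question the bot asks
    pattern: str  # regex whose first group is the expected value


# Hypothetical two-step sequence; a real bot would use a trained NLU model.
STEPS = [
    # Strict: accept only a short answer such as "Anna" or "My name is Anna".
    Step("name", "What is your name?",
         r"^(?:my name is\s+|i am\s+|i'm\s+)?([a-z]+)[.!]?$"),
    # Lenient: find an ISO-style date anywhere in the utterance.
    Step("date", "Which day suits you (YYYY-MM-DD)?", r"(\d{4}-\d{2}-\d{2})"),
]


@dataclass
class SlotFillingBot:
    steps: list
    index: int = 0
    slots: dict = field(default_factory=dict)

    def current_prompt(self) -> str:
        if self.index < len(self.steps):
            return self.steps[self.index].prompt
        return "Thank you, your appointment request is complete."

    def hear(self, utterance: str) -> str:
        """Look only for the value the current step expects; re-prompt if it is missing."""
        if self.index >= len(self.steps):
            return self.current_prompt()
        step = self.steps[self.index]
        match = re.search(step.pattern, utterance.strip(), flags=re.IGNORECASE)
        if match is None:
            return "Sorry, I didn't catch that. " + step.prompt
        self.slots[step.slot] = match.group(1)
        self.index += 1
        return self.current_prompt()


if __name__ == "__main__":
    bot = SlotFillingBot(STEPS)
    print(bot.current_prompt())  # What is your name?
    print(bot.hear("My name is Anna and I also want to ask about parking"))
    # Sorry, I didn't catch that. What is your name?
    print(bot.hear("My name is Anna"))  # Which day suits you (YYYY-MM-DD)?
    print(bot.hear("Maybe 2024-05-13 if possible"))
    print(bot.slots)  # {'name': 'Anna', 'date': '2024-05-13'}
```

Whether a step should be strict or lenient is a design choice: strict matching avoids storing a wrong value, at the cost of asking the user to repeat themselves.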

In summary, modern conversational systems (bots) offer many opportunities to improve our work and extend the functionality of existing solutions. However, implementing them properly requires both technical knowledge and experience with customer service standards. We build every solution for people, and we always put them at the center. Today’s customers and users expect self-service and 24/7 access to information, and often only modern conversational systems will allow us to meet these growing expectations.